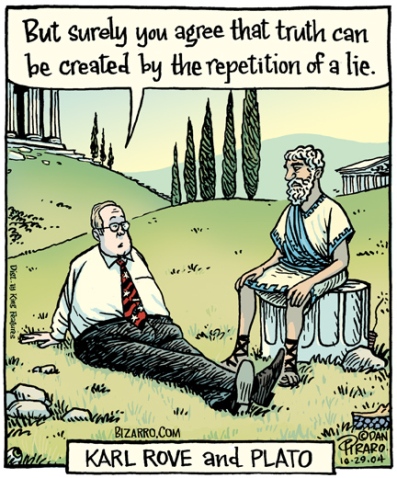

Courtesy Dan Piraro, Bizarro.com

“It ain’t what you don’t know that gets you into trouble. It’s what you know for sure that just ain’t so.” – Mark Twain

In their 2010 report, When Corrections Fail: The Persistence of Political Misperceptions, Brendan Nyhan and Jason Reifler write, “To date, the study of citizens’ knowledge of politics has tended to focus on questions like veto override requirements for which answers are clearly true or false. As such, studies have typically contrasted voters who lack factual knowledge (i.e. the ‘ignorant’) with voters who possess it. But as Kuklinski et al. note (2000), some voters may unknowingly hold incorrect beliefs, especially on contemporary policy issues on which politicians and other political elites may have an incentive to misrepresent factual information. …

“Political beliefs about controversial factual questions in politics are often closely linked with one’s ideological preferences or partisan beliefs [confirmation bias]. As such, we expect that the reactions we observe to corrective information will be influenced by those preferences. … As such, we expect that liberals will welcome corrective information that reinforces liberal beliefs or is consistent with a liberal worldview and will disparage information that undercuts their beliefs or worldview (and likewise for conservatives). … However, individuals who receive unwelcome information may not simply resist challenges to their views. Instead, they may come to support their original opinion even more strongly — what we call a ‘backfire effect.’ ”

In The Debunking Handbook Part 2: The Familiarity Backfire Effect, authors John Cook and Stephan Lewandowsky write, “To test for this backfire effect, people were shown a flyer that debunked common myths about flu vaccines. Afterwards, they were asked to separate the myths from the facts. When asked immediately after reading the flyer, people successfully identified the myths. However, when queried 30 minutes after reading the flyer, some people actually scored worse after reading the flyer. The debunking reinforced the myths.

“Hence the backfire effect is real. The driving force is the fact that familiarity increases the chances of accepting information as true. Immediately after reading the flyer, people remembered the details that debunked the myth and successfully identified the myths. As time passed, however, the memory of the details faded and all people remembered was the myth without the ‘tag’ that identified it as false. This effect is particularly strong in older adults because their memories are more vulnerable to forgetting of details.” [Proving Dan Piraro’s comic panel “Karl Rove and Plato” true!]

In Skeptical Science (July 2014), David McRaney notes, “When your deepest convictions are challenged by contradictory evidence, your beliefs get stronger.”

However, Cultural Studies analyst Jamie O’Boyle says that “this automatic bias can be modified if you slow down the process.”

Science Daily (Nov. 2, 2012) states, “Liberals and conservatives who are polarized on certain politically charged subjects become more moderate when reading political arguments in a difficult-to-read font, researchers report in a new study.

“The study is the first to use difficult-to-read materials to disrupt what researchers call the ‘confirmation bias,’ the tendency to selectively see only arguments that support what you already believe, psychology Professor Jesse Preston said.

“The new research, reported in the Journal of Experimental Social Psychology, is one of two studies to show that subtle manipulations that affect how people take in information can reduce political polarization. The other study, which explores attitudes toward a Muslim community center near the World Trade Center site, is described in a paper in the journal Social Psychological and Personality Science.

“And [the font study] is the first to show that the intervention can moderate both deeply held political beliefs as well as newly formed biases, [Preston] said.

“ ‘Not only are people considering more the opposing point of view,’ Preston said, ‘but they’re also being more skeptical of their own because they’re more critically engaging both sides of the argument.’

“ ‘We showed that if we can slow people down, if we can make them stop relying on their gut reaction — that feeling that they already know what something says — it can make them more moderate; it can have them start doubting their initial beliefs and start seeing the other side of the argument a little bit more,’ Ivan Hernandez said.”

In an opinion piece for The New York Times (Dec. 11, 2015), fact-checker Angie Drobnic Holan writes that Bernie Sanders, closely followed by Hillary Clinton, rates the highest for truthful statements (at 54 and 51 percent, respectively). Scroll down the linked article to view the chart of all candidates.

So, what does all this mean?

Just this: when it comes to personal ideology – religious, political, psychological or social – the more closely held the belief, the less likely you are to change it, despite factual evidence to the contrary. This makes it even more difficult to live up to the ethical value of fairness: being open and impartial in gathering and evaluating the information necessary for making decisions.

The answer, according to Professor Jesse Preston, is to slow down and carefully review as much information as possible, particularly opposing information, before making a decision.

Comments

“What does all this mean?” It means relatively NOTHING when its conclusion suggests that Hillary Clinton is among the most honest of current politicians (the “study” thankfully excluding he who said, “If you like your doctor, you can keep your doctor” and “I am drawing a red line in the sand.”). The current e-mail situation is in direct contradiction to her intrinsic honesty, which has never been her forte since her Watergate days, and I sincerely hope the FBI goes forward with an indictment. Finally, when one checks on Angie Drobnic and on just who and what PolitiFact is, the amazing revelation appears that they are far-left and always have been. So, in keeping with your admonition, Jim, I am “reviewing the information before making a decision.” It is time to check the “fact-checkers,” as they, INDEED, have an agenda, right down to where their money comes from and whom they tout as “most honest.” Perhaps that could be the next deep, long essay. And yes, it is nice to see “comments” appear to assure us that live people are really out there and are reading your in-depth studies.

Always a fascinating read, Jim, and thanks for including my comic. It’s good to know that there is real science behind what I only intuited from the Bush administration and the early years of Faux News. I find it disheartening, however, that the more we learn about the human mind the more we find that political beliefs become almost pathological and thus nearly impossible to change, no matter how nonsensical they may be.